In early maritime trade, merchants would avoid a king’s tax by docking a few miles further along the coast under a different jurisdiction. Today, the “cargo” refers to code and computation, while the “coastline” is a complex patchwork of international treaties, national laws and industry self-regulation. We are entering an era of AI governance arbitrage, in which the pace of innovation hinges more on how well actors navigate regulatory gaps than on technological breakthroughs.

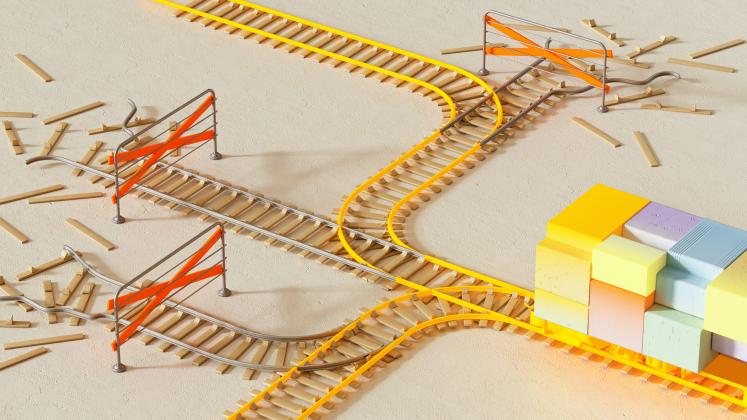

The global AI scene is increasingly splitting into three separate layers: international frameworks, national rules and industry standards. These layers often develop on their own and sometimes do not fit well together. Without ways to coordinate them, the world risks a regulatory race to the bottom, compromising safety, trust and long-term value for quick wins and short-term gains.

The implications are worldwide. Fragmented governance could distort competition, weaken international trust in AI systems and lead to uneven technological development between countries that set the rules and those that must follow them.

The three-layer collision

Friction in AI governance occurs because these three layers function at different speeds and follow different philosophies.

At the international level, institutions serve as conveners and norm-setters. Organizations like the United Nations, the G20 and the G77 help shape global standards and shared principles around safety, human rights and development. The UN provides legitimacy and universality; the G20 offers political influence and economic coordination among major economies; and the G77 represents the collective voice of developing countries.

While these institutions are crucial for shaping global norms and values, their frameworks often serve as guiding principles rather than legally enforceable rules. Their influence stems from coordination and legitimacy, not enforcement.

The national layer functions through domestic law and strategic policy. Governments increasingly see AI as both an economic engine and a matter of national security. The European Union’s AI Act, now moving into its high-risk implementation phase, stands as one of the most comprehensive regulatory frameworks so far. The United States has adopted a more flexible approach focused on maintaining innovation. China incorporates AI governance into its broader framework of state oversight and technological growth.

These different approaches mirror various political systems, economic priorities and societal values. However, they also produce an uneven regulatory environment that companies and developers must navigate.

The industry and standards layer evolves the fastest. Technology companies and organizations such as the International Organization for Standardization (ISO) and the Institute of Electrical and Electronics Engineers (IEEE) increasingly influence governance through technical standards, safety protocols and platform rules.

In practice, an update on GitHub or a safety protocol adopted by a major laboratory can influence global development more quickly than a treaty negotiated over many years. This makes participation in technical standards organizations critically important. If such institutions lack broad global representation, technical norms risk reflecting the priorities of only a few actors rather than the interests of the wider international community.

The real cost of fragmentation

This multi-layered system encourages regulatory arbitrage. When developers see safety audits or compliance requirements in one jurisdiction as too burdensome, they do not necessarily stop innovation. Instead, they might move development to jurisdictions with fewer regulatory restrictions.

This dynamic goes beyond normal regulatory competition. It can lead to situations where avoiding safety rules becomes a competitive edge. If one country demands strict stress-testing of advanced AI models while another has lax regulations, the commercially safest option for developers might also be the riskiest for society.

Fragmentation also has economic consequences. Large multinational technology firms can afford teams of lawyers and compliance specialists capable of navigating dozens of regulatory regimes. Smaller companies and startups often cannot. As a result, regulatory complexity can unintentionally reinforce the dominance of already powerful firms.

There is also a wider geopolitical concern. If governance frameworks stay fragmented, global trust in AI systems might decline. Countries could become hesitant to adopt technologies developed under regulatory environments they do not fully trust, thereby slowing international innovation and cooperation.

Towards regulatory interoperability

A single global AI regulator is unlikely to form. The geopolitical significance of AI ensures that national sovereignty will remain central to governance. What is feasible, however, is regulatory interoperability: frameworks that enable different regulatory systems to work together.

The global economy has experienced similar coordination in the past. International trade became more efficient through the standardization of shipping containers, and financial regulation was partially aligned through the Basel Accords. Both developments depended on multilateral institutions to establish the trust and technical infrastructure needed for convergence.

AI needs a similar approach: a common global language of risk and safety.

If an AI system is certified as meeting strong, trustworthy safety standards within an accepted technical framework, one developed by inclusive organizations like ISO and supported by international political platforms such as the G20 and the United Nations, that certification should be recognized across different jurisdictions.

Three shifts are particularly important.

First, governments should work towards mutual recognition of core safety standards. Through cooperation within organizations such as the UN and the G20, countries can develop shared definitions of unacceptable AI risks.

Second, national legal frameworks should align with international technical standards, particularly those developed by organizations such as ISO. This enables countries with limited regulatory capacity to implement trustworthy governance systems without developing complex regulatory structures themselves.

Third, transparency should be a requirement for market access. Developers should keep verifiable “governance passports”, auditable records that detail how AI models are trained, tested, evaluated and monitored. Multilateral institutions can help develop the metrics and verification mechanisms needed to build trust across borders.
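
To make the idea of a "governance passport" more concrete, the following is a minimal sketch of what such an auditable record might look like in Python. It is an illustration only: the `GovernancePassport` class, its field names and the `passport_digest` and `verify` helpers are all hypothetical, and no existing standard or regulator is assumed to define them. The sketch shows one possible way to make a record tamper-evident, by publishing a SHA-256 digest of its canonical form so that any later change to the record can be detected.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class GovernancePassport:
    """Hypothetical record; the field names are illustrative, not a standard."""
    model_name: str
    training: str      # how the model was trained
    testing: str       # which tests were run before release
    evaluation: str    # how results were evaluated
    monitoring: str    # how the deployed model is monitored
    standards: tuple = ()  # identifiers of standards claimed (assumed free text)


def passport_digest(passport: GovernancePassport) -> str:
    """Return a SHA-256 hex digest of the passport's canonical JSON form."""
    canonical = json.dumps(asdict(passport), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify(passport: GovernancePassport, published_digest: str) -> bool:
    """Check that a passport still matches the digest published for it."""
    return passport_digest(passport) == published_digest


# Usage: a developer publishes the digest; an auditor later re-checks it.
passport = GovernancePassport(
    model_name="demo-model",
    training="curated public corpus",
    testing="stress tests on safety prompts",
    evaluation="independent third-party review",
    monitoring="monthly incident reporting",
    standards=("example-standard",),
)
published = passport_digest(passport)
tampered = replace(passport, testing="none")

print(verify(passport, published))  # True
print(verify(tampered, published))  # False
```

A digest of this kind only shows that a record has not been altered since it was published. Whether the record's claims are true would still depend on the audits and verification mechanisms that multilateral institutions help to define.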

The strategic moment

AI governance is reaching a critical stage. International organizations can offer legitimacy and coordination; major economic forums can rally political support; standards organizations can develop the technical infrastructure that enables interoperability.

The world has spent years debating AI ethics. The next step is turning those principles into governance systems that work across borders.

If regulatory fragmentation continues, governance could shift from a tool for ensuring consistency to a tool for exploitation. This may lead to a two-tier AI landscape: one group of countries and companies controlling the technology, and another that must follow rules set by others.

Avoiding that outcome is more than just a regional issue. It is a worldwide priority.

Suggested citation: Tshilidzi Marwala. "The AI Governance Arbitrage," United Nations University, UNU Centre, 2026-03-06, https://unu.edu/article/ai-governance-arbitrage.